AppTask is a user-facing task type in CAMS.

Configure AppTasks

Define app task metadata, measures, and triggers in your study protocol.

Run and Track Tasks

Use AppTaskController and UserTask state/events to drive runtime behavior.

UI and Notifications

Render task cards in your app and notify users when tasks are available.

Extend the Model

Add custom UserTask types and register a UserTaskFactory.

End-to-end flow

1. Define an AppTask in the protocol: configure an AppTask with task metadata plus one or more background measures.
2. Trigger and enqueue a UserTask: when triggered, CAMS creates a UserTask and puts it on the task queue in the AppTaskController.
3. Render and execute in the app UI: the app listens to the task queue and renders the task list and each task for the user, providing callback methods for starting, completing, or canceling tasks.

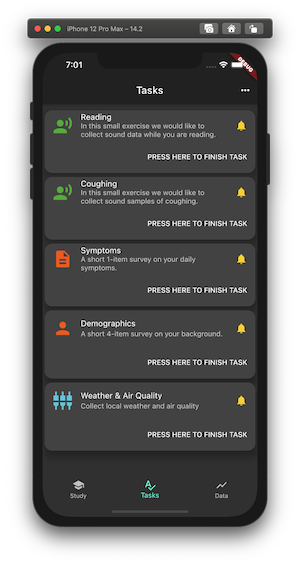

Task List

Task Done

Configuring and using app tasks

App tasks are defined in the study_protocol_manager.dart file.

For example, the sensing app task at the bottom of the list is created by this configuration:
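A minimal sketch of what such a configuration could look like, assuming the `carp_core`, `carp_mobile_sensing`, and `carp_context_package` packages. The owner id, protocol name, task title, and description are hypothetical, and constructor parameters may differ between CAMS versions, so check the names against the version you use.

```dart
import 'package:carp_core/carp_core.dart';
import 'package:carp_mobile_sensing/carp_mobile_sensing.dart';
import 'package:carp_context_package/carp_context_package.dart';

/// Builds a protocol containing one sensing app task.
/// All literal values below are illustrative assumptions.
SmartphoneStudyProtocol buildProtocol() {
  final protocol = SmartphoneStudyProtocol(
    ownerId: 'owner@example.org', // hypothetical owner
    name: 'App task example', // hypothetical protocol name
  );

  // The phone is the primary device that runs the task.
  final phone = Smartphone();
  protocol.addPrimaryDevice(phone);

  // An app task of type sensing with two background measures.
  // Assumption: the context measures may also need their online
  // services configured in the protocol; that setup is omitted here.
  final environmentTask = AppTask(
    type: AppTask.SENSING_TYPE,
    title: 'Weather & Air Quality',
    description: 'Collect local weather and air quality',
    measures: [
      Measure(type: ContextSamplingPackage.WEATHER),
      Measure(type: ContextSamplingPackage.AIR_QUALITY),
    ],
  );

  // Trigger the task whenever no task with this name is on the queue.
  protocol.addTaskControl(
    NoUserTaskTrigger(taskName: environmentTask.name),
    environmentTask,
    phone,
  );

  return protocol;
}
```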

Compared to a BackgroundTask, an AppTask can also be configured with user-facing properties:

| Property | Purpose |
|---|---|
| type | Task type, for example sensing, survey, or audio. |
| title | Short title shown on the task card. |
| description | Short descriptive text shown on the task card. |
| instructions | More detailed guidance for the user. |
| time to complete | Estimated completion time for the task. |
| notification | Whether a notification is sent when a new task is available. |
| expire | When the task expires and is no longer available. |

This configuration uses a NoUserTaskTrigger with an AppTask of type sensing and the measures WEATHER and AIR_QUALITY.

When triggered, the task is enqueued and can be shown in the app UI. When the user starts it (for example by pressing “PRESS HERE TO FINISH TASK”), a background task is started and collects the measures, and the app task is marked as done.

For more setup details, see the PulmonaryMonitor.

App task execution

When an AppTask is triggered, it is executed by an AppTaskExecutor:

- Based on the AppTask configuration, a UserTask is created. This user task embeds a BackgroundTaskExecutor, which is used later (when the app task is started) to collect the measures.
- This user task is enqueued in the AppTaskController. The AppTaskController is a singleton and is central to the handling of AppTasks, including creating notifications.
- All triggered user tasks are available in the userTaskQueue for custom rendering in the app.
- The user task has a set of callback methods for marking the task as started, done, canceled, or expired, all of which are called by the app.
- When the user task is started, it uses the embedded BackgroundTaskExecutor to collect the measures. This background data collection stops when the task is marked as done or, for one-time measures, when the measures have been collected.
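The flow above can be sketched with a small, self-contained model in plain Dart. This is not the CAMS implementation: every class and function here (`SketchUserTask`, `SketchTaskController`, `triggerSketchTask`) is a hypothetical stand-in that only mirrors the roles described in the list, namely a singleton controller holding a queue and a task whose callbacks are invoked by the app.

```dart
import 'dart:async';

/// Lifecycle states of the stand-in task (hypothetical, not the CAMS enum).
enum SketchTaskState { enqueued, started, done, canceled, expired }

/// Stand-in for a user task. The `collected` list plays the role of the
/// embedded background executor that gathers the measures.
class SketchUserTask {
  SketchUserTask(this.title, this.measures);

  final String title;
  final List<String> measures;
  final List<String> collected = [];
  SketchTaskState state = SketchTaskState.enqueued;

  /// Called by the app when the user starts the task.
  void onStart() {
    state = SketchTaskState.started;
    collected.addAll(measures); // pretend each measure is sampled once
  }

  /// Called by the app when the user finishes the task.
  void onDone() {
    state = SketchTaskState.done;
  }

  /// Called by the app when the user dismisses the task.
  void onCancel() {
    state = SketchTaskState.canceled;
  }
}

/// Stand-in for the singleton controller that owns the task queue.
class SketchTaskController {
  SketchTaskController._();
  static final SketchTaskController _instance = SketchTaskController._();
  factory SketchTaskController() => _instance;

  final List<SketchUserTask> userTaskQueue = [];
  final StreamController<SketchUserTask> _events =
      StreamController<SketchUserTask>.broadcast();

  /// Emits a task every time one is enqueued, so a UI can rebuild.
  Stream<SketchUserTask> get userTaskEvents => _events.stream;

  void enqueue(SketchUserTask task) {
    userTaskQueue.add(task);
    _events.add(task);
  }
}

/// Stand-in for what happens when a trigger fires: create and enqueue.
SketchUserTask triggerSketchTask(String title, List<String> measures) {
  final task = SketchUserTask(title, measures);
  SketchTaskController().enqueue(task);
  return task;
}
```

In this model, calling `triggerSketchTask('Environment', ['WEATHER', 'AIR_QUALITY'])` from `main` puts the task on the shared queue in the enqueued state. Nothing is collected until the app calls `onStart()`, which matches the point that data collection is driven by the user starting the task.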

The AppTaskController and user task queue

In the PulmonaryMonitor, access to enqueued tasks is handled in sensing_bloc.dart.

The userTaskQueue property on AppTaskController gives access to the queue.

Using user task(s) in the UI of the app

Enqueued user tasks can be rendered in any app-specific UI. In the PulmonaryMonitor app, the task list shown above is implemented in task_list_page.dart, where a StreamBuilder is used to build the scrollable list view of cards.

When the user presses PRESS HERE TO FINISH TASK, the user task is started using the userTask.onStart() method. If the task has a widget, that widget is pushed to the UI. A non-UI sensing task starts and runs for 10 seconds.

A UserTask has callback methods that can be called by the app:

| Callback | Purpose |
|---|---|
| onStart | Starts the task and starts collecting defined measures. |
| onCancel | Cancels the task. |
| onDone | Marks the task as done and typically stops measure collection. |
| onExpired | Handles expiration and removes the task from the queue. |
| onNotification | Called when the user taps the OS notification for this task. |

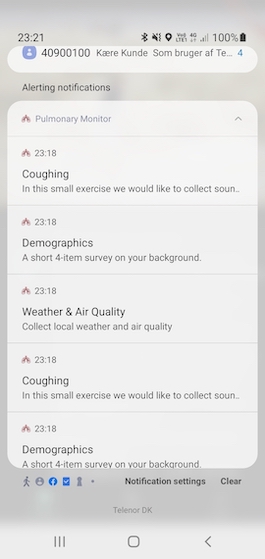

Notifications

An app task can be configured with notification. If enabled, a local notification is sent with the task title and description.

This notification setup uses flutter_local_notifications and requires app-level configuration. See Install and Configure.

Types of app tasks

CAMS currently supports several types of AppTasks, all enumerated in the AppTask class:

| Type | Purpose |
|---|---|
| SENSING_TYPE | Collects sensing data in a background task. |
| SURVEY_TYPE | Starts a survey from the carp_survey_package. |
| INFORMED_CONSENT_TYPE | Shows an informed consent flow to the user, typically using the research_package. |
| COGNITIVE_ASSESSMENT_TYPE | Runs a cognitive assessment from the carp_survey_package. |
| HEALTH_ASSESSMENT_TYPE | Collects health data via the carp_health_package. |
| AUDIO_TYPE, VIDEO_TYPE, IMAGE_TYPE | Collects media data via the carp_audio_package. |

Extending the app task model

CAMS is extensible, so you can add custom app tasks by creating new UserTask types and registering them.

This is similar to extending measures and probes, as described in Extending CAMS.

The AudioUserTask in the Pulmonary Monitor is an example of a custom app task.

Its custom user task implementation, AudioUserTask, is in audio_user_task.dart.

Remember to register the custom factory in the AppTaskController. This is typically done during the initialization of CAMS in the app. In PulmonaryMonitor, it happens when the Sensing class is created in sensing.dart.
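The idea behind registering a factory can be illustrated with a simplified, self-contained model in plain Dart. It does not use the CAMS classes: `SketchTask`, `SketchTaskFactory`, `SketchAudioTaskFactory`, and `SketchFactoryRegistry` are hypothetical stand-ins, and the real UserTaskFactory interface may look different. The sketch only shows the pattern of a factory declaring the task types it handles and a registry looking up the right factory by type.

```dart
/// Hypothetical stand-in for a user task; only carries its type and a label.
class SketchTask {
  SketchTask(this.type, this.label);

  final String type;
  final String label;
}

/// Hypothetical stand-in for a factory that knows how to build some types.
abstract class SketchTaskFactory {
  /// The task types this factory can create.
  List<String> get types;

  /// Creates a task of the given type.
  SketchTask create(String type);
}

/// A custom factory contributing one new task type.
class SketchAudioTaskFactory implements SketchTaskFactory {
  @override
  List<String> get types => const ['audio'];

  @override
  SketchTask create(String type) => SketchTask(type, 'Record audio');
}

/// Registry that maps each task type to the factory that handles it.
class SketchFactoryRegistry {
  final Map<String, SketchTaskFactory> _factories = {};

  /// Registers the factory for every type it declares.
  void register(SketchTaskFactory factory) {
    for (final type in factory.types) {
      _factories[type] = factory;
    }
  }

  /// Creates a task via the registered factory, or fails if none exists.
  SketchTask create(String type) {
    final factory = _factories[type];
    if (factory == null) {
      throw ArgumentError('No factory registered for task type "$type"');
    }
    return factory.create(type);
  }
}
```

In this sketch, asking the registry for a type that was never registered throws an ArgumentError. That is a design choice of the model, not a statement about how CAMS behaves, but it shows why registration is best done during initialization, before any task of the custom type can be triggered.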